NIST (the National Institute of Standards and Technology) has released an AI Risk Management Framework. It is still a work in progress, but with this 1.0 version it is taking shape.

There are many aspects to discuss, but the most important ones are summarized in the diagram below.

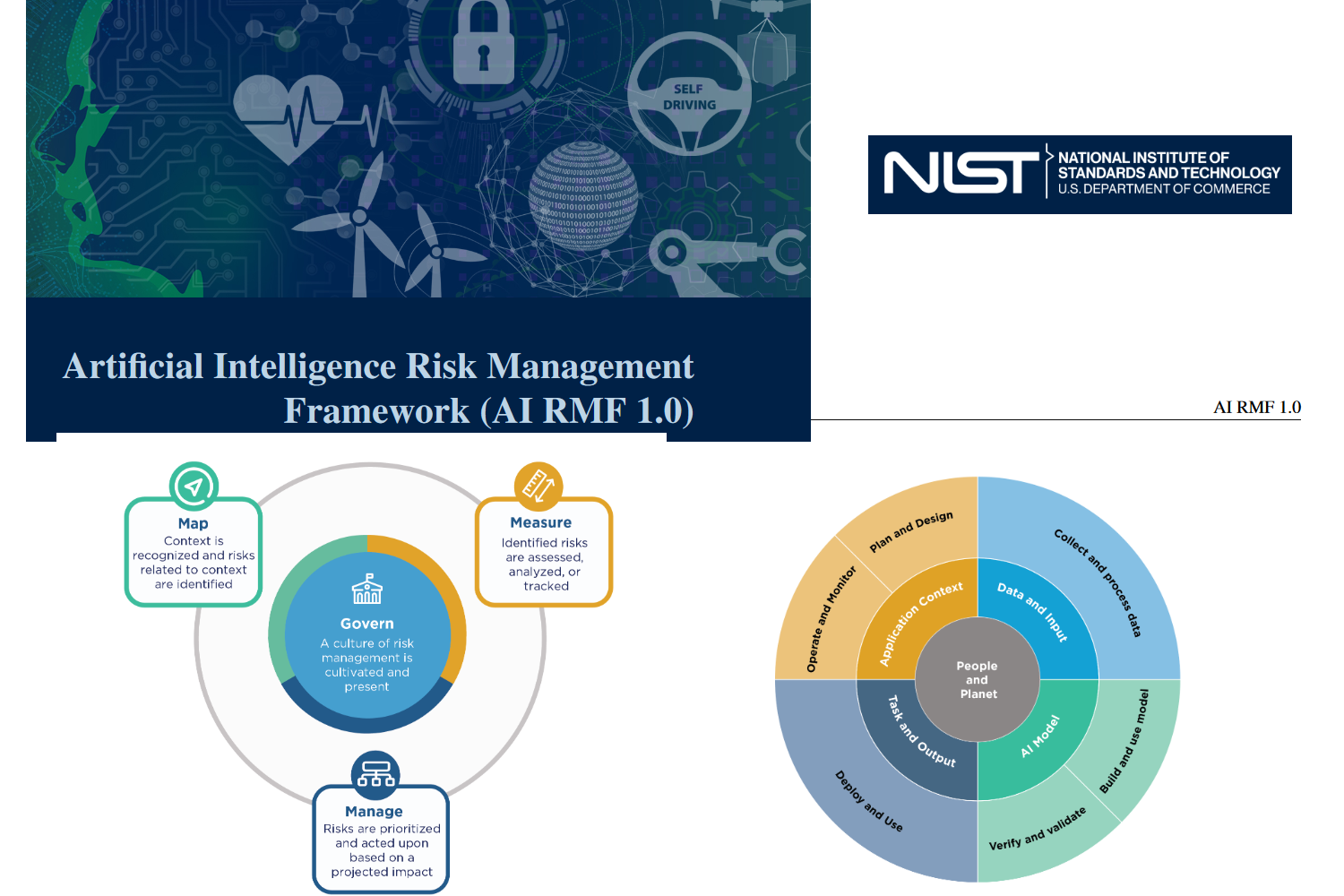

At the core of the framework is the GOVERN function: “A culture of risk management is cultivated and present.”

Three functions surround this core:

- Map – Context is recognized and risks related to context are identified.

- Measure – Identified risks are assessed, analyzed, or tracked.

- Manage – Risks are prioritized and acted upon based on a projected impact.

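As we know from PCI compliance risk assessments, Risk = Threat × Impact, and this framework is similar in that respect: it treats risk as a combination of how likely an event is to occur and how severe its consequences would be.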
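The Threat × Impact formula, combined with the MANAGE step of prioritizing risks by projected impact, can be sketched in a few lines. This is a minimal illustration, assuming each risk is scored on a hypothetical 1 to 5 scale for threat (likelihood) and for impact; the scales, class names, and example risks are illustrative and are not defined by the NIST framework.

```python
from dataclasses import dataclass


@dataclass
class Risk:
    name: str
    threat: int  # hypothetical 1-5 likelihood score
    impact: int  # hypothetical 1-5 severity score

    def __post_init__(self) -> None:
        # Reject scores outside the assumed 1-5 scale
        for label, value in (("threat", self.threat), ("impact", self.impact)):
            if not 1 <= value <= 5:
                raise ValueError(f"{label} must be between 1 and 5, got {value}")

    @property
    def score(self) -> int:
        # Risk = Threat * Impact, as in the PCI-style formula
        return self.threat * self.impact


def prioritize(risks: list[Risk]) -> list[Risk]:
    """Order risks from highest to lowest score (the MANAGE step)."""
    return sorted(risks, key=lambda r: r.score, reverse=True)


if __name__ == "__main__":
    register = [
        Risk("Biased training data", threat=4, impact=5),
        Risk("Model drift after deployment", threat=3, impact=3),
        Risk("Prompt injection", threat=2, impact=4),
    ]
    for risk in prioritize(register):
        print(f"{risk.score:>2}  {risk.name}")
```

Running it lists the biased-data risk first (score 20), then drift (9), then prompt injection (8).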

The GOVERN function begins as follows:

GOVERN 1: Policies, processes, procedures, and practices across the organization related to the mapping, measuring, and managing of AI risks are in place, transparent, and implemented effectively.

GOVERN 1.1: Legal and regulatory requirements involving AI are understood, managed, and documented.

GOVERN 1.2: The characteristics of trustworthy AI are integrated into organizational policies, processes, procedures, and practices.

GOVERN 1.3: Processes, procedures, and practices are in place to determine the needed level of risk management activities based on the organization’s risk tolerance.

GOVERN 1.3 is much easier to write as a standard than to implement, because one first has to determine the organization’s risk tolerance for AI software. To quantify that tolerance, one has to identify the true threats and the impact of possible vulnerabilities.
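One way to make GOVERN 1.3 concrete is to write the organization’s risk tolerance down as explicit thresholds and derive the required level of risk management activity from them. The sketch below assumes a hypothetical risk score from 1 to 25 (threat times impact, each on a 1 to 5 scale) and three made-up activity tiers; the threshold values are placeholders each organization would have to set for itself.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RiskTolerance:
    """Hypothetical organizational tolerance, expressed as score thresholds."""
    accept_below: int   # scores under this are accepted with routine monitoring
    escalate_from: int  # scores at or above this need executive sign-off

    def __post_init__(self) -> None:
        if not 1 <= self.accept_below <= self.escalate_from <= 25:
            raise ValueError("need 1 <= accept_below <= escalate_from <= 25")


def required_activity(score: int, tolerance: RiskTolerance) -> str:
    """Map a 1-25 risk score to a (hypothetical) level of risk management activity."""
    if not 1 <= score <= 25:
        raise ValueError("score must be between 1 and 25")
    if score < tolerance.accept_below:
        return "monitor"
    if score < tolerance.escalate_from:
        return "mitigate"
    return "escalate"


if __name__ == "__main__":
    # A conservative organization and a more risk-tolerant one
    conservative = RiskTolerance(accept_below=4, escalate_from=10)
    tolerant = RiskTolerance(accept_below=10, escalate_from=20)
    for score in (6, 12, 20):
        print(score, required_activity(score, conservative), required_activity(score, tolerant))
```

The same score of 12 calls for escalation in the conservative organization but only mitigation in the tolerant one, which is why the tolerance has to be established before the level of activity can be decided.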

This area of artificial intelligence is still in its infancy.

Still, one can build AI systems within an ethically minded program by using the MAP function:

MAP 1.1: Intended purposes, potentially beneficial uses, context-specific laws, norms and expectations, and prospective settings in which the AI system will be deployed are understood and documented. Considerations include: the specific set or types of users along with their expectations; potential positive and negative impacts of system uses to individuals, communities, organizations, society, and the planet; assumptions and related limitations about AI system purposes, uses, and risks across the development or product AI lifecycle; and related TEVV and system metrics.

MAP 1.2: Interdisciplinary AI actors, competencies, skills, and capacities for establishing context reflect demographic diversity and broad domain and user experience expertise, and their participation is documented. Opportunities for interdisciplinary collaboration are prioritized.
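Because MAP 1.1 and MAP 1.2 are largely about documenting context, it can help to keep that documentation as a structured record that can be checked for gaps. The sketch below is one possible shape, assuming a hypothetical `SystemContext` record whose fields loosely follow the considerations listed in MAP 1.1 and MAP 1.2; the field and function names are illustrative and are not defined by NIST.

```python
from dataclasses import dataclass, field, fields


@dataclass
class SystemContext:
    """Hypothetical record of the context MAP 1.1 and MAP 1.2 ask teams to document."""
    intended_purpose: str = ""
    beneficial_uses: list[str] = field(default_factory=list)
    applicable_laws_and_norms: list[str] = field(default_factory=list)
    deployment_settings: list[str] = field(default_factory=list)
    user_types: list[str] = field(default_factory=list)
    potential_negative_impacts: list[str] = field(default_factory=list)
    assumptions_and_limitations: list[str] = field(default_factory=list)
    tevv_metrics: list[str] = field(default_factory=list)
    # MAP 1.2: who took part in establishing context, mapped to their domain
    participants: dict[str, str] = field(default_factory=dict)


def missing_items(ctx: SystemContext) -> list[str]:
    """Return the names of fields that are still empty (undocumented)."""
    return [f.name for f in fields(ctx) if not getattr(ctx, f.name)]


if __name__ == "__main__":
    ctx = SystemContext(
        intended_purpose="Rank loan applications for manual review",
        user_types=["loan officers"],
        participants={"A. Analyst": "credit risk", "B. Counsel": "legal"},
    )
    print(missing_items(ctx))
```

Here the check reports that beneficial uses, applicable laws and norms, deployment settings, negative impacts, assumptions, and TEVV metrics are still undocumented.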

As one works through each MAP subcategory, it becomes clearer how risk will be managed. One has to evaluate the AI system’s purpose and uses.

TEVV stands for Test and Evaluation, Verification and Validation. It is a framework used to assess whether a technology or system meets its design specifications and requirements, and whether it is sufficient for its intended use.
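The difference between verification (does the system meet its design specification?) and validation (is it good enough for its intended use?) can be illustrated with two simple checks. This is a sketch under stated assumptions: the single metric (accuracy), the threshold values, the data, and the function names are all hypothetical, and a real TEVV plan would cover far more than one metric.

```python
def accuracy(predictions: list[int], labels: list[int]) -> float:
    """Fraction of predictions that match the labels."""
    if len(predictions) != len(labels) or not labels:
        raise ValueError("need equal-length, non-empty inputs")
    return sum(p == y for p, y in zip(predictions, labels)) / len(labels)


def verify(test_preds: list[int], test_labels: list[int], spec_min_accuracy: float) -> bool:
    """Verification: does the model meet the accuracy in its design spec on the test set?"""
    return accuracy(test_preds, test_labels) >= spec_min_accuracy


def validate(field_preds: list[int], field_labels: list[int], required_accuracy: float) -> bool:
    """Validation: is accuracy on data from the intended setting sufficient for the use?"""
    return accuracy(field_preds, field_labels) >= required_accuracy


if __name__ == "__main__":
    # Hypothetical results: strong on the lab test set, weaker on field data
    lab_preds = [1, 0, 1, 1, 0, 1, 0, 1, 1, 0]
    lab_labels = [1, 0, 1, 1, 0, 1, 0, 1, 0, 0]
    field_preds = [1, 1, 0, 1, 0, 1, 1, 0]
    field_labels = [1, 0, 0, 1, 1, 1, 0, 0]
    print("verified:", verify(lab_preds, lab_labels, spec_min_accuracy=0.85))
    print("validated:", validate(field_preds, field_labels, required_accuracy=0.85))
```

In this made-up case the model passes verification (0.9 on the lab set) but fails validation (0.625 on field data), which is why TEVV asks both questions.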

So one must tie the system to its intended use and purpose, making sure it fulfills that purpose rather than reflecting biases introduced by the programmers or by data-entry methods as the AI is built from datasets.
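One simple way to check whether data or design choices have introduced bias is to compare outcome rates across groups. The sketch below computes the positive-outcome rate for each group and the gap between the highest and lowest rates (often called the demographic parity difference). The group labels, data, and the 0.1 review threshold are hypothetical, and this is only one of many fairness measures; which ones fit depends on the system’s intended use.

```python
from collections import defaultdict


def positive_rates(outcomes: list[int], groups: list[str]) -> dict[str, float]:
    """Share of positive (1) outcomes for each group."""
    if len(outcomes) != len(groups):
        raise ValueError("outcomes and groups must be the same length")
    totals: dict[str, int] = defaultdict(int)
    positives: dict[str, int] = defaultdict(int)
    for outcome, group in zip(outcomes, groups):
        totals[group] += 1
        positives[group] += outcome
    return {g: positives[g] / totals[g] for g in totals}


def parity_gap(outcomes: list[int], groups: list[str]) -> float:
    """Difference between the highest and lowest group positive rates."""
    rates = positive_rates(outcomes, groups).values()
    return max(rates) - min(rates)


if __name__ == "__main__":
    # Hypothetical approval decisions for applicants from two groups
    outcomes = [1, 1, 1, 0, 1, 0, 0, 1, 0, 0]
    groups = ["A", "A", "A", "A", "A", "B", "B", "B", "B", "B"]
    print(positive_rates(outcomes, groups))
    gap = parity_gap(outcomes, groups)
    print(f"gap = {gap:.2f}", "-> review" if gap > 0.1 else "-> ok")
```

Group A is approved 80% of the time and group B 20%, so the 0.60 gap would flag the system for review against its intended purpose.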

Contact me to discuss how you can build an ethical AI program with the NIST AI framework.